Distortion and Sound Quality: What is Reasonable?

Introduction

In this blog post I would like to discuss distortion levels in loudspeakers in the context of high end audio (HEA). What are reasonable levels of distortion, and how do we measure them?

Setting a target

For the sake of discussion let us arbitrarily select a target distortion level of 1%. We will see later if this is reasonable. We also need to define our target SPL. Let's select something normal for HEA, which is 90dB SPL at the listening position. To translate that into speaker output we need to establish a room size, so let's select an average room of 12' wide x 16' deep (3.7m x 4.9m), or 192 sq. ft. (17.8 sq. m). A room this size implies a typical listening distance of 9 feet (2.7m). This establishes the required output level for each speaker, which ends up being 96dB SPL at 1m.
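The derivation of the 96dB figure is not spelled out above. One way to arrive at a number like it is sketched below, assuming free-field inverse-square falloff and two speakers summing as uncorrelated sources. Both are simplifications (a real room adds boundary reinforcement), and the function name and the `n_speakers` parameter are purely illustrative.

```python
import math

def required_spl_at_1m(target_spl_db, distance_m, n_speakers=2):
    """Per-speaker SPL at 1 m needed to reach target_spl_db at distance_m."""
    # Assumption: inverse-square law, so level falls by 20*log10(distance)
    # relative to the 1 m reference.
    distance_loss_db = 20 * math.log10(distance_m)
    # Assumption: speakers sum as uncorrelated sources, adding 10*log10(n) dB.
    summation_gain_db = 10 * math.log10(n_speakers)
    return target_spl_db + distance_loss_db - summation_gain_db

# 90 dB at a 2.7 m listening distance with a stereo pair
print(round(required_spl_at_1m(90, 2.7), 1))  # 95.6
```

Under these assumptions the result rounds to the 96dB target used in the rest of this post.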

To summarize we have the following target specification:

- Maximum SPL of 96dB at 1m with a distortion threshold of 1%.

Let's add some additional parameters to further define our goals.

- Distortion threshold for midrange and treble frequencies only

Let's assume that we are reproducing high quality music content that has a reasonable amount of dynamic range. An example of a good recording is Steely Dan's catalogue, which has great PRAT (pace, rhythm, and timing), a non-technical term used by audiophiles to describe good transients. The closest technical measure is the crest factor, which is the ratio of a signal's peak level to its root mean square (RMS) level. Steely Dan's music contains transient peaks more than 12dB above the RMS level of the soundtrack. I will discuss this later, but for now let us add this requirement to our specification list:

- Crest factor +12dB
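For readers who want to check the crest factor of their own material, here is a minimal sketch. It assumes the audio is already available as a plain list of sample values; `crest_factor_db` is a name chosen for this example, not a function from any particular tool.

```python
import math

def crest_factor_db(samples):
    """Peak-to-RMS ratio of a block of samples, in dB."""
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20 * math.log10(peak / rms)

# Sanity check: one full cycle of a pure sine has a crest factor of about 3 dB.
sine = [math.sin(2 * math.pi * n / 1000) for n in range(1000)]
print(round(crest_factor_db(sine), 2))  # 3.01

# With a +12 dB crest factor, peaks at the 96 dB RMS target reach:
print(96 + 12)  # 108
```

A steady sine tone sits at roughly 3dB, so a +12dB requirement means the speaker has to handle peaks well above what an RMS meter reports.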

Measuring Distortion

Below is an intermodulation distortion result for a compression driver I tested recently. This driver represents the distortion profile of an average small format compression driver, but I will not name the specific model.

The noise floor sits 58dB below the test signal (-58dB) at 95dB SPL at 1m. Our performance target of 1% distortion corresponds to -40dB, so we've exceeded the target by 18dB.
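The conversion between percent distortion and dB used here is the standard amplitude-ratio relationship, dB = 20·log10(percent / 100). A short sketch, with helper names that are only illustrative:

```python
import math

def percent_to_db(percent):
    """Distortion as an amplitude percentage -> level relative to the signal in dB."""
    return 20 * math.log10(percent / 100)

def db_to_percent(db):
    """Level relative to the signal in dB -> distortion as an amplitude percentage."""
    return 100 * 10 ** (db / 20)

print(round(percent_to_db(1.0), 1))   # -40.0
print(round(db_to_percent(-58), 3))   # 0.126

# Margin between the 1% target and the measured -58 dB floor
print(round(percent_to_db(1.0) - (-58), 1))  # 18.0
```

So the measured -58dB floor corresponds to roughly 0.13% distortion.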

Not so fast...

The decibel meter I used to set the test signal level from the loudspeaker reads the root mean square (RMS) average. This does not account for the crest factor typically seen in music and sound, so we can kiss that 18dB of headroom goodbye. Some drum and percussion transients can easily exceed an 18dB crest factor.

To further explain this let us look at what happens to the noise floor when we increase the test SPL another +10dB. Shown below is the intermodulation distortion with the same driver and setup but with the test SPL increased to 105dB.

As you can see, the noise floor rises to -48dB relative to the test signal. Dynamic range has dropped by 10dB, the same amount as the SPL increase.
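To see how this interacts with crest factor, the two measurements can be turned into a rough 1dB-per-1dB model. This is only a sketch: it extrapolates from two data points on one driver, and it treats musical peaks as if they were a steady test level, which is a simplification. The function names and default values are illustrative.

```python
def dynamic_range_db(spl_db, ref_spl_db=95.0, ref_range_db=58.0):
    """Assumed model: dynamic range shrinks 1 dB for every 1 dB of added SPL."""
    return ref_range_db - (spl_db - ref_spl_db)

def max_rms_spl_db(target_range_db=40.0, crest_db=12.0,
                   ref_spl_db=95.0, ref_range_db=58.0):
    """Highest RMS level whose peaks still keep target_range_db of dynamic range."""
    max_peak_spl_db = ref_spl_db + (ref_range_db - target_range_db)
    return max_peak_spl_db - crest_db

print(dynamic_range_db(105.0))        # 48.0
print(max_rms_spl_db())               # 101.0
print(max_rms_spl_db(crest_db=18.0))  # 95.0
```

Under this model, material with an 18dB crest factor uses up all of the margin at a 95dB RMS level.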

General Note on Noise Floor

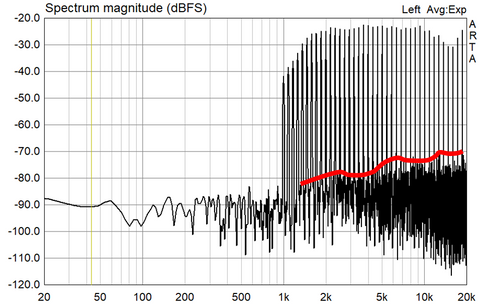

It has been my observation that any increase in SPL brings a corresponding reduction in dynamic range, defined here as the noise floor level relative to the test signal level. For example, every +5dB increase in test SPL produces a 5dB reduction in dynamic range. I have only observed this during multitone testing. It does not show up consistently with a simple harmonic distortion test, which uses a single sine tone as the test signal. Only when using a dual tone test signal do I see a consistent result as I incrementally increase the test SPL. Beyond that, the 1/9 octave test signal used in my tests produces over 20 test tones simultaneously. This gives increasingly consistent results, with distortion rising linearly as output increases. It also gives a more clearly defined noise floor, as you can see from the red line shown below, whereas a dual tone test can sometimes fail to reveal noise components.
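To give a feel for what a 1/9 octave multitone signal contains, here is a minimal sketch that lists the tone frequencies and sums them. The 1kHz to 8kHz band is an assumption picked for illustration, not the range used in my measurements, and the equal-amplitude, zero-phase sum is the simplest possible choice rather than what any particular measurement package generates.

```python
import math

def multitone_frequencies(f_start_hz, f_stop_hz, fraction=9):
    """Tone frequencies spaced 1/fraction octave apart from f_start_hz up to f_stop_hz."""
    octaves = math.log2(f_stop_hz / f_start_hz)
    # Small epsilon guards against floating point error at exact band edges.
    n_steps = math.floor(fraction * octaves + 1e-9)
    return [f_start_hz * 2 ** (k / fraction) for k in range(n_steps + 1)]

def multitone_sample(freqs, t_seconds):
    """Equal-amplitude, zero-phase sum of all tones at time t, scaled to +/-1."""
    return sum(math.sin(2 * math.pi * f * t_seconds) for f in freqs) / len(freqs)

freqs = multitone_frequencies(1000.0, 8000.0)  # hypothetical 3-octave band
print(len(freqs))  # 28
```

Three octaves at 1/9 octave spacing already gives 28 simultaneous tones, which is consistent with the "over 20 tones" figure above.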

This noise profile is revealed across the frequency spectrum, as shown by the red line. This can help to reveal potential bandwidth limitations of a particular driver. For example, a large format compression driver struggles to reproduce the upper treble frequencies. Shown below is a typical large format compression driver with a 3" voice coil diaphragm. While the larger diaphragm area reproduces the midrange frequencies with ease, it clearly struggles in the upper treble, with only 40dB of dynamic range at 105dB SPL at 1m.

In terms of what constitutes a good target for low distortion, I can only correlate what subjectively sounds good against what I typically measure. The best drivers I've heard always have very low distortion, typically in the -65 to -75dB range at an output level of 95dB SPL at 1m. This is the case for compression drivers and for exotic drivers such as electrostatic, planar, and ribbon types reproducing midrange and treble frequencies. For bass and mid-bass frequencies it generally depends on driver size. An average 12" woofer will typically achieve 40dB of dynamic range at 95dB SPL. Below are the distortion results for a 12" Eton 12-612 woofer for reference.

Conclusion

An overstressed driver will sound fatiguing and harsh, and will lack dynamics. These are the factors that bear on how much distortion you will hear:

- Overall SPL level at the listening position

- Listening distance

- Room size as well as other factors such as enclosed or open to adjacent rooms (not discussed here) and room boundary reinforcement.

- Musical genre (crest factor generally varies with musical genre)

- Mixing and mastering of the recorded soundtrack in terms of dynamic range (e.g. the loudness wars)

- Acceptable distortion threshold (e.g. 1% or -40dB)

Distortion is just one metric that can be used in the overall specification for achieving acceptable sound quality in high end audio. Ignoring it could be a pitfall for your project.